Day 58: Performance Optimization & Scaling

What We’re Building Today

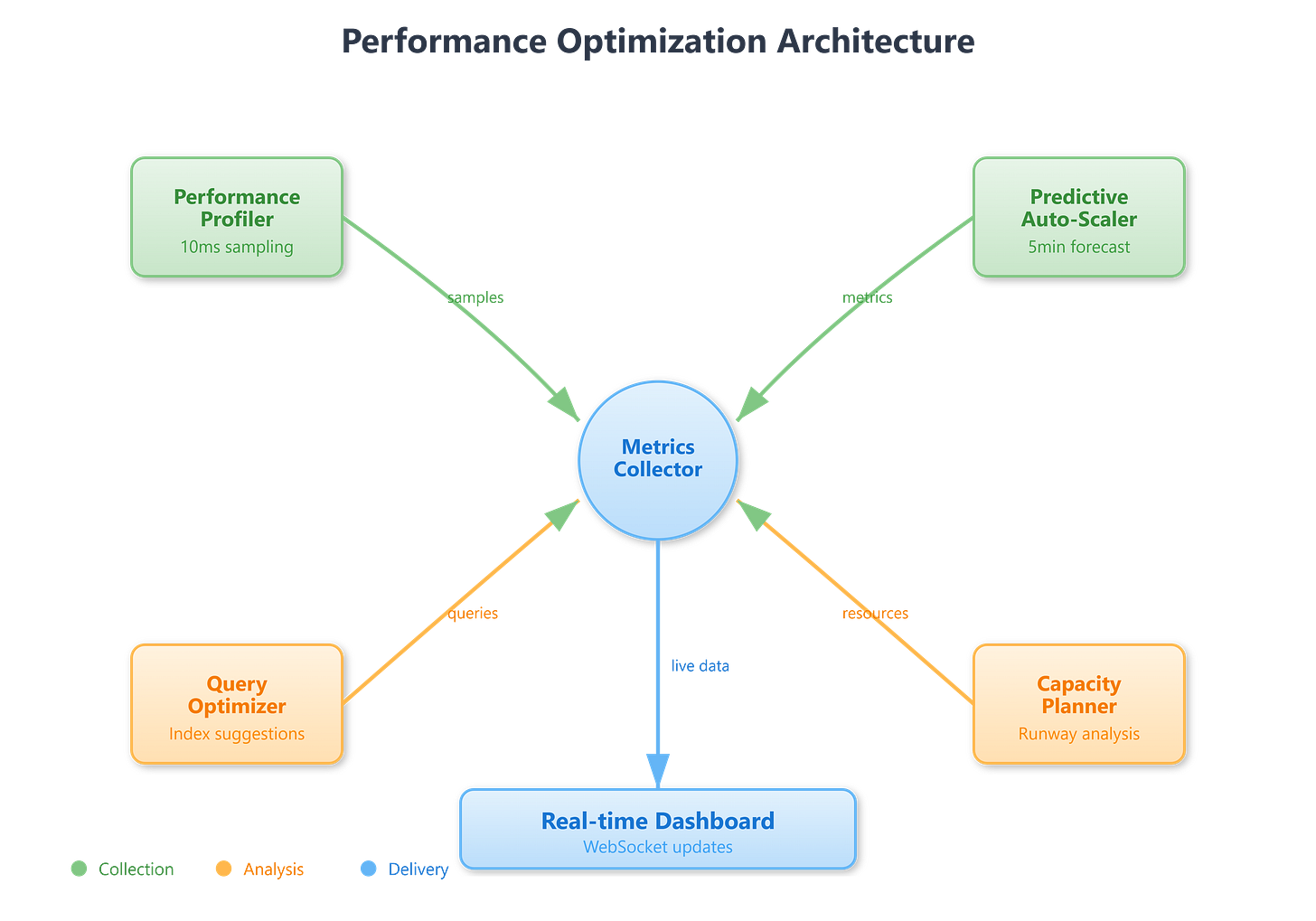

Today we’re building a complete performance optimization system that goes beyond basic monitoring. We’ll create an intelligent performance profiler that analyzes application behavior, a predictive auto-scaler that anticipates load changes before they happen, and a capacity planning engine that models growth scenarios. Think of it as giving your infrastructure a brain that learns from patterns and makes smart decisions about resources.

What You’ll Ship:

Real-time performance profiling dashboard with hotspot detection

Predictive auto-scaling engine using time-series forecasting

Database query optimizer with automatic index recommendations

Load testing framework with realistic traffic patterns

Capacity planning model with growth projections

Core Concepts: Making Systems Fast and Scalable

Performance Optimization Fundamentals

Performance optimization isn’t about making everything faster—it’s about identifying bottlenecks and eliminating them systematically. Netflix discovered that 90% of their latency issues came from just 10% of their code paths. This is the critical insight: measure first, optimize what matters.

The Performance Pyramid:

Profiling: Measure where time is actually spent, not where you think it’s spent

Analysis: Identify patterns—is it CPU, memory, I/O, or network bound?

Optimization: Apply targeted fixes to the actual bottlenecks

Validation: Confirm improvements with metrics, not assumptions
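To make the profiling step concrete, here is a minimal sketch using Python's standard `cProfile` and `pstats` modules. The functions `fast_step`, `slow_deserialize`, and `handle_request` are hypothetical stand-ins invented for illustration; they are not taken from any real system.

```python
import cProfile
import pstats


def fast_step():
    """Cheap work that should barely register in the profile."""
    return 1


def slow_deserialize(items=2000):
    """Hypothetical hotspot: deliberately wasteful work standing in for a slow code path."""
    total = 0
    for _ in range(items):
        total += sum(range(50))
    return total


def handle_request():
    """Hypothetical request handler that mixes a cheap step and an expensive one."""
    fast_step()
    return slow_deserialize()


def cumulative_times(func):
    """Profile one call of func and return {function_name: cumulative_seconds}."""
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    stats = pstats.Stats(profiler)
    # stats.stats maps (file, line, name) ->
    # (primitive calls, total calls, own time, cumulative time, callers)
    return {
        name: cumulative
        for (_file, _line, name), (_cc, _nc, _tt, cumulative, _callers)
        in stats.stats.items()
    }


if __name__ == "__main__":
    times = cumulative_times(handle_request)
    # Print the three entries with the largest cumulative time.
    for name, seconds in sorted(times.items(), key=lambda kv: kv[1], reverse=True)[:3]:
        print(f"{name}: {seconds:.6f}s")
```

Ranking by cumulative time shows which call trees dominate a request. Ranking by own time (the third field) instead shows which individual functions burn the CPU.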

Real-world example: Shopify reduced checkout time from 3.5s to 850ms by profiling and discovering that session deserialization consumed 40% of request time. They didn’t rewrite the entire system—they fixed the one thing that mattered.

Intelligent Auto-Scaling

Traditional auto-scaling is reactive: wait for load to spike, then scramble to add capacity. Predictive auto-scaling looks at historical patterns and adds capacity before the spike hits. Spotify uses this to scale their recommendation services 15 minutes before peak traffic periods, ensuring zero latency degradation during evening listening hours.

The algorithm combines three signals:

Time-series patterns: Daily/weekly traffic rhythms

Event correlation: Marketing campaigns, releases, holidays

Real-time derivatives: Rate of change in current traffic
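One possible way to combine the three signals is sketched below. This is an illustrative assumption, not a description of any production scaler: the `Signals` fields, the "take the larger of pattern and trend" rule, the 25% headroom, and the per-replica capacity are all hypothetical choices.

```python
import math
from dataclasses import dataclass


@dataclass
class Signals:
    seasonal_baseline_rps: float  # typical load for the target time slot (daily/weekly rhythm)
    event_multiplier: float       # 1.0 means no known campaign, release, or holiday
    current_rps: float            # load observed right now
    rps_change_per_min: float     # rate of change of current traffic


def predict_rps(signals, lead_minutes):
    """Predict load lead_minutes ahead as the larger of two estimates."""
    # Estimate 1: historical pattern, scaled up for known events.
    pattern = signals.seasonal_baseline_rps * signals.event_multiplier
    # Estimate 2: linear extrapolation of the current trend.
    trend = signals.current_rps + signals.rps_change_per_min * lead_minutes
    return max(pattern, trend)


def replicas_needed(predicted_rps, rps_per_replica, headroom=0.25, min_replicas=1):
    """Convert predicted load into a replica count with spare headroom."""
    required = predicted_rps * (1 + headroom) / rps_per_replica
    return max(min_replicas, math.ceil(required))


if __name__ == "__main__":
    campaign_evening = Signals(
        seasonal_baseline_rps=1000, event_multiplier=1.5,
        current_rps=800, rps_change_per_min=20,
    )
    predicted = predict_rps(campaign_evening, lead_minutes=15)
    print(predicted, replicas_needed(predicted, rps_per_replica=200))
```

Taking the maximum is a conservative choice: the scaler provisions for whichever signal predicts more load, trading some cost for a lower risk of under-provisioning.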

Database Performance Tuning

Databases become slow for predictable reasons: missing indexes, inefficient queries, lock contention, or poor data distribution. Uber’s query optimizer automatically suggests indexes by analyzing query patterns and identifying sequential scans that touch millions of rows.

Query Optimization Strategy:

Analyze execution plans to find table scans

Identify missing indexes from WHERE/JOIN clauses

Rewrite N+1 queries into batch operations

Implement query result caching for repeated patterns
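The first two steps of this strategy can be sketched with Python's built-in `sqlite3` module, used here only as a convenient stand-in for a production database. The `orders` table, its columns, and the index name `idx_orders_customer` are hypothetical.

```python
import sqlite3

QUERY = "SELECT * FROM orders WHERE customer_id = ?"


def build_demo_db():
    """Create an in-memory database with a hypothetical orders table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
    )
    conn.executemany(
        "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
        [(i % 100, float(i)) for i in range(1000)],
    )
    return conn


def query_plan(conn, sql, params=()):
    """Return the human-readable detail column of SQLite's EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]


if __name__ == "__main__":
    conn = build_demo_db()
    # Without an index, the WHERE clause forces a full table scan.
    print(query_plan(conn, QUERY, (42,)))
    # Add an index on the filtered column, then inspect the plan again.
    conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
    print(query_plan(conn, QUERY, (42,)))
```

In SQLite the plan detail begins with `SCAN` for a full table scan and with `SEARCH ... USING INDEX` once a usable index exists. Other databases expose the same information through their own `EXPLAIN` output.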

Capacity Planning Mathematics

Capacity planning answers: “When will we run out of resources?” Amazon’s capacity planning uses Little’s Law: Concurrency = Throughput × Latency, with latency expressed in seconds. If your system handles 10,000 req/s with 50ms latency (0.05 s), then 10,000 × 0.05 = 500, so you need capacity for 500 concurrent requests.
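The Little's Law arithmetic can be checked with a few lines of Python. The per-instance concurrency limit of 64 used in the example is a made-up figure for illustration only.

```python
import math


def required_concurrency(throughput_rps, latency_ms):
    """Little's Law: concurrency = throughput (req/s) x latency (s)."""
    return throughput_rps * latency_ms / 1000


def instances_needed(concurrency, per_instance_concurrency):
    """Round up to whole instances given a (hypothetical) per-instance limit."""
    return math.ceil(concurrency / per_instance_concurrency)


if __name__ == "__main__":
    in_flight = required_concurrency(throughput_rps=10_000, latency_ms=50)
    print(in_flight)                        # 500.0 concurrent requests
    print(instances_needed(in_flight, 64))  # 8 instances at 64 requests each
```

The same relation works in reverse: if latency doubles while throughput stays constant, the number of in-flight requests doubles too, which is why latency regressions often show up first as exhausted connection or worker pools.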

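Growth modeling uses exponential smoothing: Forecast = α × Actual + (1-α) × Previous_Forecast, where the smoothing factor α (typically 0.1 to 0.3) balances responsiveness against stability: a higher α tracks recent changes faster, while a lower α filters out more noise.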
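A minimal sketch of the smoothing formula follows. Seeding the first forecast with the first observation is a common convention assumed here, and the weekly request figures are invented sample data.

```python
def exponential_smoothing(actuals, alpha=0.2):
    """Apply Forecast = alpha * Actual + (1 - alpha) * Previous_Forecast.

    Returns one smoothed value per observation; the last value serves as
    the forecast for the next, not yet observed, period.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    if not actuals:
        return []
    forecast = actuals[0]  # assumption: seed with the first observation
    forecasts = [forecast]
    for actual in actuals[1:]:
        forecast = alpha * actual + (1 - alpha) * forecast
        forecasts.append(forecast)
    return forecasts


if __name__ == "__main__":
    weekly_peak_rps = [1000, 1100, 1050, 1300, 1250]  # invented sample data
    for alpha in (0.1, 0.3):
        smoothed = exponential_smoothing(weekly_peak_rps, alpha)
        print(alpha, round(smoothed[-1], 1))
```

Note that simple exponential smoothing produces a flat forecast: it estimates the current level but does not extrapolate a trend. For sustained growth, a trend-aware variant such as double exponential smoothing may be a better fit.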