Day 57: Hyperscale Architecture Patterns

Building for 10 Million Requests Per Second

What We’re Building Today

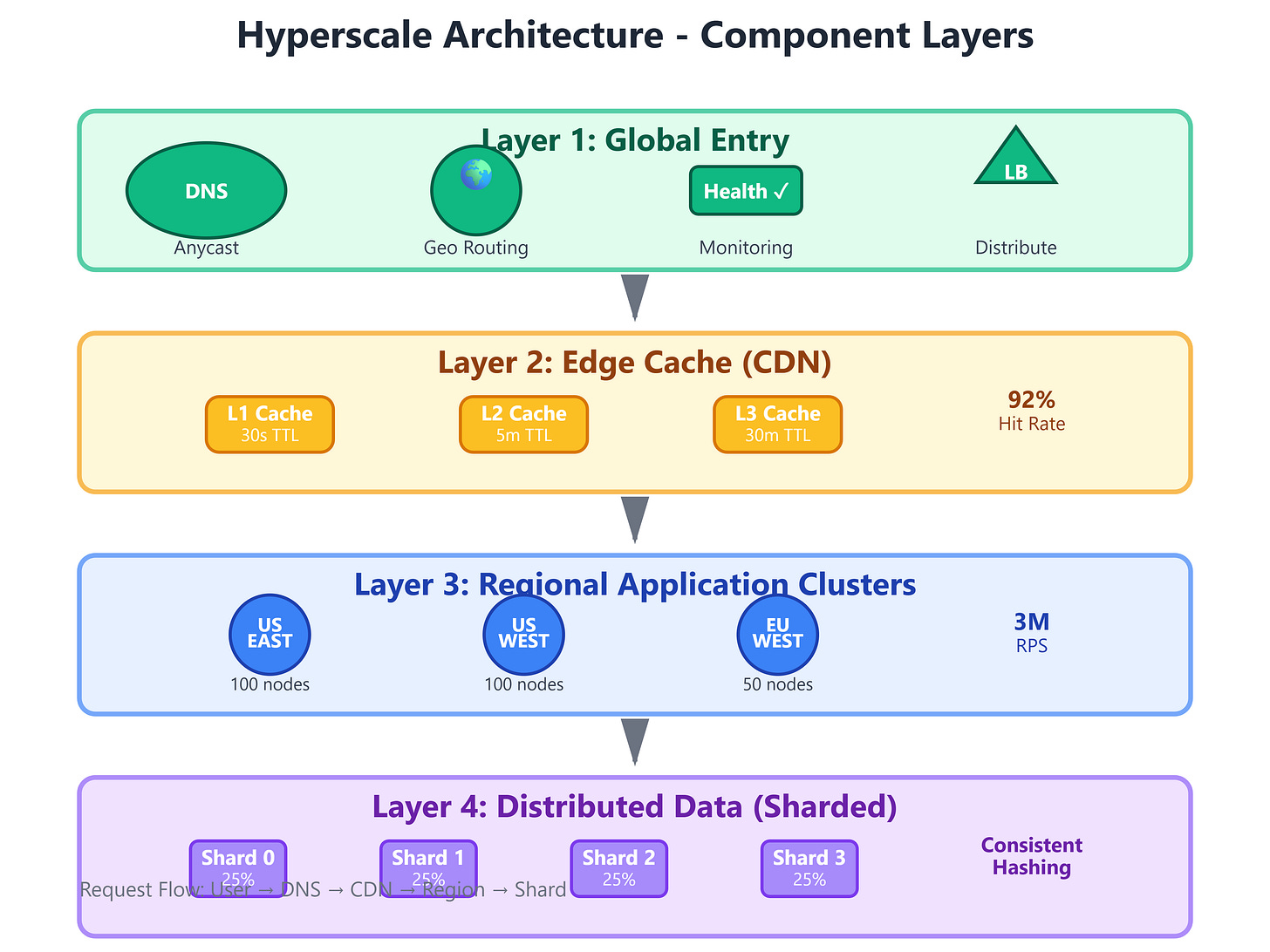

Today we’re architecting a hyperscale system capable of handling 10 million requests per second across multiple geographic regions. We’ll build a complete implementation featuring global load balancing with intelligent geographic routing, multi-region deployment with automatic failover, CDN edge caching, database sharding with consistent hashing, and real-time traffic visualization.

This isn’t a toy example: we’re implementing the same core patterns used by Netflix (handling 450 billion events daily), Google (processing 8.5 billion searches daily), and Cloudflare (serving 46 million requests per second at peak).

What You’ll Build:

Global Load Balancer with geographic routing and health-aware distribution

Multi-Region Deployment Manager with automatic failover detection

CDN Edge Cache Layer with intelligent invalidation

Database Sharding Engine using consistent hashing with virtual nodes

Real-Time Traffic Analytics Dashboard showing global request distribution
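The failover-detection part of the Multi-Region Deployment Manager can be sketched as a small per-region state machine with hysteresis. This is a minimal sketch under stated assumptions: `RegionHealth`, `FAILURE_THRESHOLD`, and `RECOVERY_THRESHOLD` are hypothetical names, health checks are assumed to arrive as simple pass/fail booleans, and the threshold values are illustrative rather than taken from any production system.

```python
from dataclasses import dataclass

# Hypothetical thresholds; real values depend on probe interval and tolerance for flapping.
FAILURE_THRESHOLD = 3   # consecutive failed checks before failing over
RECOVERY_THRESHOLD = 2  # consecutive passing checks before failing back


@dataclass
class RegionHealth:
    name: str
    healthy: bool = True
    consecutive_failures: int = 0
    consecutive_successes: int = 0

    def record_check(self, passed: bool) -> bool:
        """Record one health-check result; return True if the region's state flipped."""
        if passed:
            self.consecutive_successes += 1
            self.consecutive_failures = 0
            if not self.healthy and self.consecutive_successes >= RECOVERY_THRESHOLD:
                self.healthy = True  # fail back only after sustained recovery
                return True
        else:
            self.consecutive_failures += 1
            self.consecutive_successes = 0
            if self.healthy and self.consecutive_failures >= FAILURE_THRESHOLD:
                self.healthy = False  # fail over: stop routing traffic here
                return True
        return False


region = RegionHealth("us-east")
results = [True, False, False, False, True, True]
transitions = [region.record_check(r) for r in results]
print(transitions)     # [False, False, False, True, False, True]
print(region.healthy)  # True
```

Requiring several consecutive failures before failing over, and several successes before failing back, keeps a single dropped probe from bouncing traffic between regions.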

Core Concepts: Hyperscale Architecture Fundamentals

What Makes Hyperscale Different

When Netflix serves more than 260 million subscribers, or when Spotify delivers music to 550 million users, they aren’t just running bigger servers; they’re employing fundamentally different architectural patterns. Hyperscale systems distribute load geographically, replicate data strategically, and make routing decisions in microseconds. Going from 10,000 requests per second to 10 million isn’t a matter of buying bigger hardware; it’s a matter of architecture.

Geographic Distribution: Traditional systems run in a single data center. Hyperscale systems deploy across 20+ regions worldwide and route each request to the closest healthy endpoint. When you watch YouTube in Mumbai, you’re hitting servers in India, not California. That can cut round-trip latency from around 200ms to around 20ms, a 10x improvement that makes streaming feel instantaneous.
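Routing each request to the closest healthy endpoint boils down to two steps: drop unhealthy regions, then pick the one with the lowest measured latency. The sketch below assumes a hypothetical `RTT_MS` table of round-trip times from one client location (the numbers are illustrative, not measurements) and a hypothetical `pick_region` helper; real global load balancers typically get this signal from anycast, GeoDNS, or live latency probes rather than a static table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    healthy: bool


# Hypothetical round-trip times (ms) from a client in Mumbai to each region.
RTT_MS = {
    "ap-south (Mumbai)": 20,
    "ap-southeast (Singapore)": 60,
    "eu-west (Ireland)": 130,
    "us-west (California)": 200,
}


def pick_region(regions: list[Region], rtt_ms: dict[str, float]) -> Region:
    """Return the lowest-latency healthy region; raise if none is healthy."""
    candidates = [r for r in regions if r.healthy and r.name in rtt_ms]
    if not candidates:
        raise RuntimeError("no healthy region available")
    return min(candidates, key=lambda r: rtt_ms[r.name])


regions = [Region(name, healthy=True) for name in RTT_MS]
print(pick_region(regions, RTT_MS).name)  # ap-south (Mumbai)

# Mumbai fails its health checks: traffic shifts to the next-closest region.
regions[0] = Region("ap-south (Mumbai)", healthy=False)
print(pick_region(regions, RTT_MS).name)  # ap-southeast (Singapore)
```

Because health is filtered before latency is compared, a failed region degrades users to the next-closest endpoint instead of failing their requests.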

Intelligent Caching: CDNs like Cloudflare cache static content at 330+ edge locations. When 100,000 users request the same Netflix thumbnail, only the first request at each edge location has to reach the origin servers; the rest are served from the edge cache, typically within 10ms. At a 95% cache-hit ratio, only 1 in 20 requests reaches the origin, which cuts origin load by 95% and dramatically improves user experience.

Data Sharding: Uber doesn’t store all 7 billion trips in a single database; it partitions data across thousands of shards using city-based and user-based strategies. Each shard handles 10,000 to 50,000 requests per second, and shards scale independently based on geographic demand. On New Year’s Eve, Uber spins up additional shards in high-traffic cities within minutes.
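Consistent hashing with virtual nodes, the technique named for the Database Sharding Engine, can be sketched as a sorted ring of hash positions where each shard owns many points. This is a minimal sketch, not Uber’s scheme: `ConsistentHashRing`, the MD5-based position function, and 100 virtual nodes per shard are assumptions chosen for illustration. Spreading each shard over many points means a newly added shard takes a roughly even slice of keys from every existing shard instead of splitting a single neighbor.

```python
import bisect
import hashlib


def _hash(key: str) -> int:
    # Stable hash: Python's built-in hash() for str is randomized per process.
    return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")


class ConsistentHashRing:
    """Consistent hashing with virtual nodes (a minimal sketch)."""

    def __init__(self, vnodes_per_shard: int = 100):
        self.vnodes = vnodes_per_shard
        self._ring: list[int] = []        # sorted virtual-node positions
        self._owner: dict[int, str] = {}  # position -> owning shard

    def add_shard(self, shard: str) -> None:
        for i in range(self.vnodes):
            pos = _hash(f"{shard}#vnode{i}")
            if pos in self._owner:  # astronomically unlikely collision; skip it
                continue
            bisect.insort(self._ring, pos)
            self._owner[pos] = shard

    def remove_shard(self, shard: str) -> None:
        self._ring = [p for p in self._ring if self._owner[p] != shard]
        self._owner = {p: s for p, s in self._owner.items() if s != shard}

    def shard_for(self, key: str) -> str:
        if not self._ring:
            raise RuntimeError("ring is empty")
        # First virtual node clockwise from the key's position, wrapping around.
        idx = bisect.bisect_right(self._ring, _hash(key)) % len(self._ring)
        return self._owner[self._ring[idx]]


ring = ConsistentHashRing(vnodes_per_shard=100)
for s in ["shard-a", "shard-b", "shard-c", "shard-d"]:
    ring.add_shard(s)

keys = [f"user:{i}" for i in range(10_000)]
before = {k: ring.shard_for(k) for k in keys}
ring.add_shard("shard-e")
after = {k: ring.shard_for(k) for k in keys}
moved = sum(before[k] != after[k] for k in keys)
print(f"{moved / len(keys):.1%} of keys moved to shard-e")
```

Only keys that now land on the new shard’s virtual nodes move (ideally about one fifth with five shards); every other key stays put, which is what makes adding shards during a traffic spike cheap compared with rehashing everything modulo the shard count.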